Red Hat Research Quarterly

Highlights from this issue

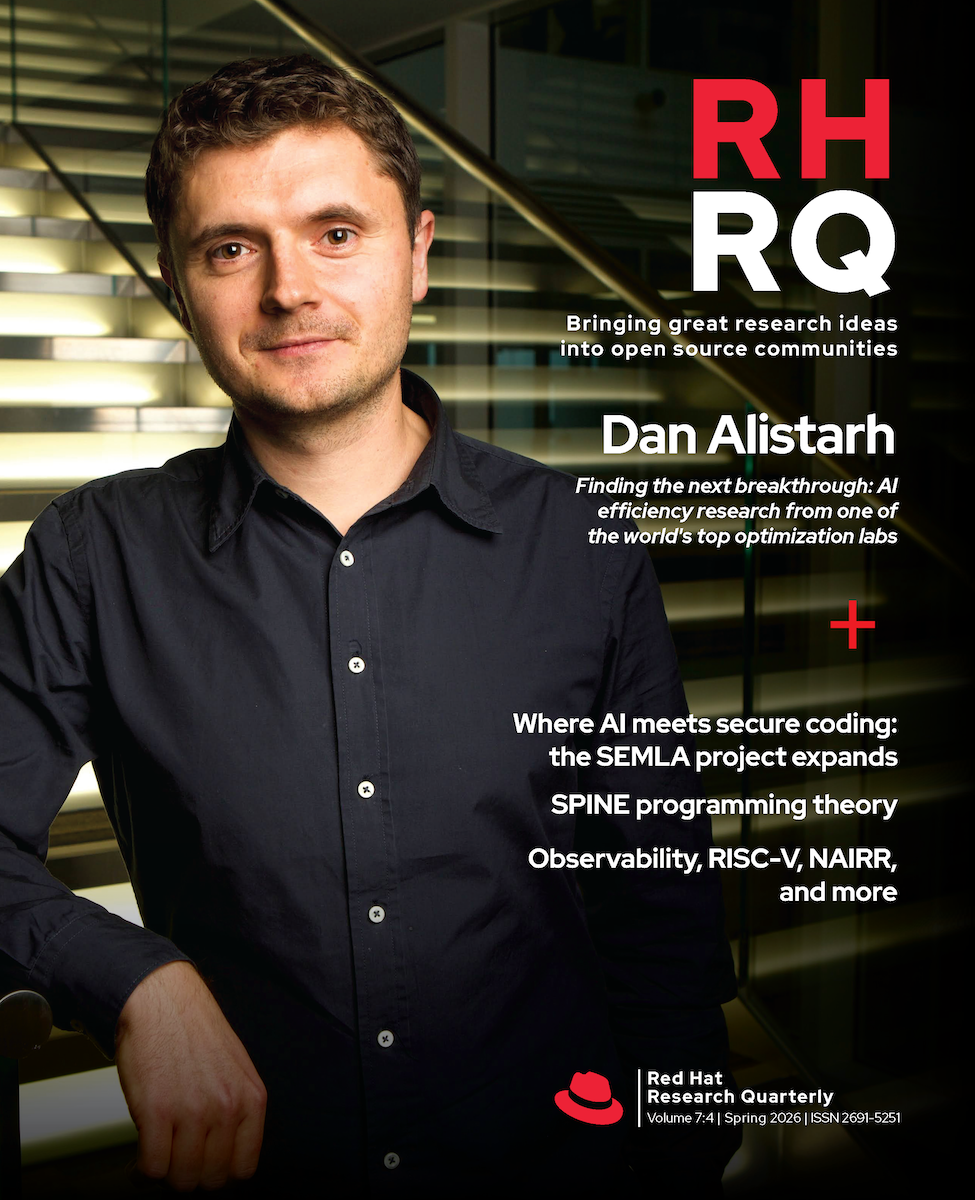

Finding the next breakthrough: AI efficiency research from one of the world’s top optimization labs

Nir Shavit interviews ISTA professor Dan Alistarh on quantization, sparsity, and the next frontiers in efficiency research.

Volume 7, Issue 4 • ISSN 2691-5278

Departments

Features

Inside this issue

RISC-V is an increasingly prevalent hardware architecture in embedded systems, and it’s beginning to serve as the base architecture for many new AI accelerators. It is an instruction set architecture (ISA) with roots similar to Arm and other RISC-based architectures, but with a key difference: RISC-V is developed by a community-based standards organization, RISC-V International, […]

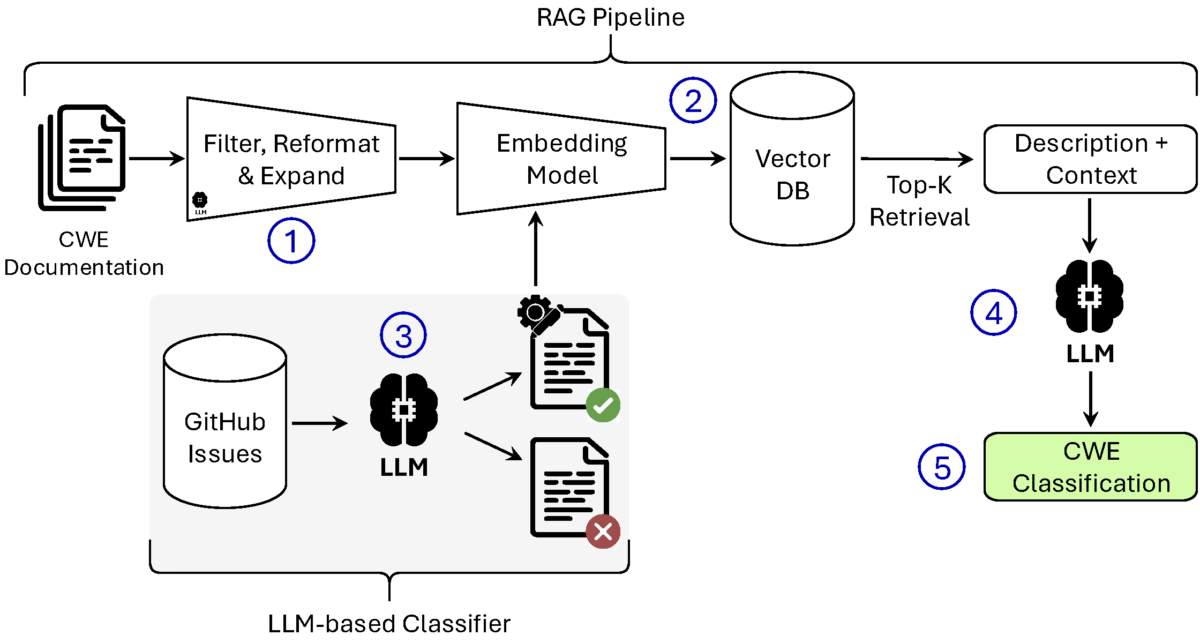

The industry-academia collaboration aimed at using LLMs to help generate more secure code builds on its success to expand research into infrastructure. In an era when software underpins everything from critical communications and global financial systems to lifesaving medical devices, security and reliability can never be an afterthought. Yet traditional development practices often leave gaps: […]

A shared national AI research infrastructure may be coming to a galaxy not so far away. Human time scales are slow—really slow. In the time it takes to type that sentence, one of the H100 GPUs powering a nearby academic datacenter has roughly 10 billion cycles to consider its place in the universe. Of course, […]

Dan Alistarh is a humble guy. The ISTA (Institute of Science and Technology Austria) professor and founding employee of Neural Magic (the startup acquired by Red Hat in 2025) isn’t one to brag, but fortunately we called in his former postdoc advisor, MIT professor and Neural Magic cofounder Nir Shavit, to really draw […]

More metrics and more dashboards mean more ways for researchers to identify actionable improvements. Optimizing the performance, stability, and resource utilization of large language model (LLM) deployments is a challenge for both users and cluster administrators. The Mass Open Cloud (MOC) now supports the ability to collect inference performance metrics for LLMs deployed in our […]

Often in RHRQ, we look at the work of people discovering new frontiers in technology, but what I hope makes us different from other technology journals is that we’re also asking how open source and open practices can make these discoveries accessible to people in the real world. This issue’s interview spotlights Nir Shavit and […]

Developers using SPINE Programming have drastically cut manual coding time while maintaining full control over their data. SPINE Programming Theory (SPT) is a form of on-device, local AI code indexing and generation that accelerates software development while ensuring that users maintain full control over their data in their own environment. SPT allows developers to focus […]