Red Hat Research Quarterly

Finding the next breakthrough: AI efficiency research from one of the world’s top optimization labs

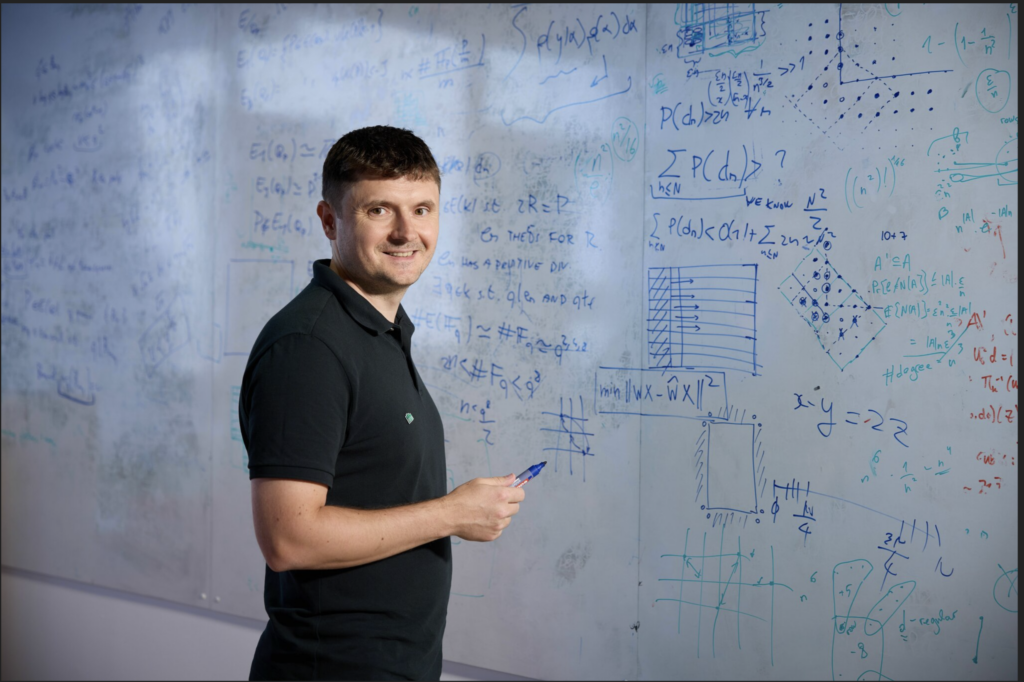

Dan Alistarh is a humble guy. The ISTA (Institute of Science and Technology Austria) professor and founding employee of Neural Magic (the startup acquired by Red Hat in 2025) isn’t one to brag, but fortunately we called in his former postdoc advisor, MIT professor and Neural Magic cofounder Nir Shavit, to really draw out the magnitude of his impact on AI efficiency. Their long-standing rapport turns this interview into a masterclass on strategies for attacking persistent bottlenecks. Dan’s home institution, ISTA, is a long-time Red Hat Research collaborator that recently joined the Red Hat Office of the CTO’s AI University Partnerships program. This new phase of collaboration is aimed at driving further AI efficiency and performance optimizations in vLLM. Nir and Dan dive into the engineering required to run trillion-parameter models on standard hardware, focusing on post-training quantization (GPTQ), sparsity, and clearing the path for massive open source LLMs. If you’re looking for a deeper perspective on the next frontier of model optimization and AI efficiency, keep reading. (Shaun Strohmer, ed.)

Nir Shavit: Remind me about your background—how you got into computing and eventually into machine learning and performance engineering.

Dan Alistarh: I always wanted to do math—my mom is a math professor. I come from Romania, where math competitions are a big thing, and I got very into it. I felt I needed to keep going after high school, and I went to this elite unit at a university in Bucharest where you only got in if you had medals in math or computer science competitions. Once I got there, I saw I wasn’t that good in math compared to my peers.

Nir Shavit: You always say those things.

Dan Alistarh: No, I’m not being modest! But I found my niche, and I was lucky to transfer to Carnegie Mellon University for my last year of college. That changed my perspective on how broad and how deep computer science is. Going into these very hard classes on compilers, operating systems, and ML really changed the way I saw it.

My bachelor’s thesis advisor was a good friend of (deep learning and neural networks pioneer) Yoshua Bengio. When I applied for grad school, the first offer I got was from the University of Montreal and his group. This was around 2005-2006, before the whole deep learning revolution. For some people at CMU, neural networks were basically taught as a cute idea that doesn’t really work. Bengio had these interesting but kooky-sounding projects—it all sounded very fishy back then. In the end, I decided to go back to Europe for graduate school and put aside ML. That’s how I ended up in Rachid Guerraoui’s lab at EPFL (in Lausanne, Switzerland) working on the theory of distributed computing.

Nir Shavit: So you went to Rachid’s, you did distributed computing, and you became an expert in specific parts of distributed computing. What were you interested in?

Dan Alistarh: My thesis was about lower bounds for the efficiency of certain concurrent objects, proving tight upper and lower bounds for how fast you could build synchronization objects like a queue or mutex. It was very theoretical and deterministic, very much in classical algorithms—a big departure from ML and AI.

Nir Shavit: Then you graduated, we met, and I loved your work, right? We decided we would love to have you at MIT, and you came for a postdoc, still in that area of distributed computing.

Dan Alistarh: Exactly. Pretty much my whole postdoc was about extensions: stochastic extensions, randomized extensions. The real step towards ML was when I moved to Microsoft Research, which was the powerhouse research lab at that time. They had world-class scientists and cutting-edge research problems, but they also forced you to be more practical and gave you more contact with real-world problems. I attended a talk by someone from the speech group; they were trying to train neural networks across multiple GPUs. They had large speech datasets and so much data they actually had to distribute it. It was very much the beginnings of this area, and they had synchronization problems and they had communication problems.

Nir Shavit: So you went into the ML world to solve communication problems?

Dan Alistarh: Exactly. It’s interesting how life sets you up for these things: I was one of the few people who had a bit of both: I could understand what this algorithm was trying to do and also the concurrency or distribution bottleneck that’s happening.

Nir Shavit: When you look at the problems you were trying to solve then versus where we are now, throwing hardware and software at this exact same problem, how would you say it evolved?

Dan Alistarh: Well, the models I train today on my personal workstation are at least a hundred times larger than what Microsoft was using its whole GPU capacity to train 12 years ago.

Nir Shavit: And the problem still hasn’t gone away. Your first papers were about understanding how to make this communication effective, and here we are today and everybody is facing the same set of problems, only much bigger.

Dan Alistarh: There, the basic issue was they had a bunch of GPUs that were powerful for what they were supposed to do, but every time they needed to send data between the GPUs, it slowed down by a hundred times, so it’s maybe a thousand times more expensive. How do you get around this? We came up with some better algorithms to dramatically decrease the size of the communication payload between GPUs.

This became a problem NVIDIA threw hardware at. It’s slightly less of a problem for today’s models because NVIDIA realized that bandwidth is a big problem. So they bought Mellanox and then put in InfiniBand, and their GPUs have massive bandwidth at this point. But with the scale of GPT models you still get this issue. The problems we’re dealing with now are similar: we no longer have to compress gradients being sent between two GPUs in most cases, but, for example, we get bottlenecks when reading model weights from main memory into GPU memory.

Optimization, quantization, and sparsity

Nir Shavit: So you evolved in the ML space, and you were still doing distributed computing as well. What happened next?

Dan Alistarh: What happened next was this company called Neural Magic called me up. You may recall they had a couple of crazy founders (Nir Shavit and Alex Matveev) who said, “Hey we’re doing a company, would you like to join?” One of the crazy ideas was to try to do inference over sparse models, and in a weird way some of the gradient compression stuff I had been doing could be applied or extended to compress weights during training.

Nir Shavit: This is what’s so interesting to me. We’re busy with the communication stuff, not so much in optimization of models, and then there’s a gradual switch that ends in you building one of the world’s top labs in optimization of machine learning.

Dan Alistarh: Some of the other top labs are in the same building you’re in right now at MIT, so I want to give everyone their due. But yes, at this point we have one of the top labs with respect to AI efficiency broadly and model compression in particular.

Efficiency through quantization and sparsity: a quick explainer

To make massive AI models practical for everyday enterprise use, researchers use two primary optimization techniques: Quantization and Sparsity.

Quantization is like reducing the “bit-depth” of the model’s data. By switching from complex 16-bit numbers to simpler 4-bit or even 1-bit formats, we shrink the model’s memory footprint, allowing it to run on standard hardware without losing its “intelligence.”

Sparsity takes this further by identifying and “turning off” the millions of connections within a model that don’t contribute to the final answer. If quantization is like lowering a photo’s resolution to save space, sparsity is like cropping out the empty background. Together, they allow users to deploy powerful AI on a fraction of the original budget.
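
To make the two ideas concrete, here is a minimal Python sketch using the simplest baselines for each: round-to-nearest 4-bit quantization and magnitude pruning. These are illustrative stand-ins, not the GPTQ or SparseGPT algorithms discussed in the interview, and the function names and toy weights are assumptions made for this example.

```python
def quantize_4bit(weights):
    """Symmetric round-to-nearest quantization to integer codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole group (an assumption of this sketch).
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    n_drop = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:n_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


# Toy weights, chosen for illustration only.
weights = [0.70, -0.33, 0.10, -0.02, 0.00, 0.28, -0.70, 0.04]

codes, scale = quantize_4bit(weights)      # small integers plus one scale
approx = dequantize(codes, scale)          # what inference would compute with
sparse = prune_by_magnitude(weights, 0.5)  # half of the entries set to zero
```

Each quantized weight lands within half a quantization step of its original value, and the pruned list keeps only the four largest-magnitude entries. Production methods choose the codes and the pruned positions in a data-aware way rather than by these simple rules.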

Nir Shavit: So you decided to shift into sparsity and into quantization. Maybe say a few words about how we compress a model.

Dan Alistarh: The basic idea is you have these massive models with billions to even trillions of parameters being trained these days, and these completely overwhelm any traditional device, like a laptop. These sets of matrices are just too large—they would fill up your disk just downloading them. A lot of the startup compute used for serving models goes into doing multiplications over these massive matrices.

There are several levels at which you would like to remove this type of massive model bottleneck. A very reasonable one is to make the models smaller so you can download them, or just load them into memory more easily. That’s usually done via weight compression. We do compression pretty much every day with ZIP and other formats. The problem with using these standard approaches is that zipping and unzipping something takes a long time because it looks for a very custom representation of the data. If you’re running on a GPU and you want to do inference, you want to decompress these matrices as fast as possible, so you need very custom and easy-to-decode formats.

One of our first contributions was to come up with a simple algorithm that can do something data aware but is still fast to decompress. That basic algorithm was GPTQ, which comes from GPT plus PTQ, or post-training quantization, where after you have a model trained you produce a compressed model that preserves the accuracy of the original. That’s basically the standard algorithm now.

Nir Shavit: And this is all open source?

Dan Alistarh: Yes. Initially at Neural Magic, we were wondering about the best way to make progress in this area, whether that’s open source or closed source. We did certainly have closed source stuff, but, at the end of the day, we decided open source is the easiest way, especially for potential collaborations with academia. There is a very powerful open source ecosystem, including, for instance, vLLM, llm-compressor, llama.cpp, and all of these compression algorithms. There’s a critical mass of companies contributing to it.

Nir Shavit: And this was not your only surprise when putting something into open source and suddenly seeing it take off. SparseGPT was very similar, right?

Dan Alistarh: Yeah, SparseGPT was really incredible in the way it took off. Over the course of time, probably GPTQ was by far the most influential–at some point I counted 55 million downloads for GPTQ models from Hugging Face.

The next frontier

Nir Shavit: What’s your perspective on how important these techniques are going to be in this new wave of LLMs and running them? Where do you think the bottlenecks are, and what are the tools we’re going to use to solve them?

Dan Alistarh: I’ll jump back to my explanation of compression formats. There’s the basic way of compressing, this ZIP-type algorithm, which allows you to compress the weights and make it easier to move them around. This allows you to manipulate them and move them into the GPU for token-by-token inference. In the single-user case, you have one big matrix being multiplied against a very small matrix.

If you have a lot of people using the LLM—and this is certainly the case for an engine such as vLLM, which is usually employed in a datacenter setup—or if a single person is sending a lot of larger requests or you’re in this prefill phase where a user’s prompt is being processed, this becomes a much more computationally intensive problem. Now you have two large matrices to be multiplied, and this process gets repeated on and on. You don’t benefit from compressing just one of the matrices; you have to compress both of them to get the computational benefits.

This is where weights and activations compression becomes very important. You need to compress both the static model state (the weights) and the dynamic model state (the activations). To go even deeper, if you’re working with an agent that has a very long task, its context becomes very large, and then you have to further compress some of the internal state. This is called the KV Cache, essentially the model’s short-term memory, which contains the entire context. This is all to say that there are different levels of compression, and the current major challenge is to produce accurate models whose entire state (weights, activations, and KV Cache) is compressed.
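
A back-of-the-envelope calculation shows why the single-user and many-user cases behave so differently. The Python sketch below estimates how much arithmetic is done per byte of weights read from memory. It rests on simplifying assumptions that are not from the interview: a dense square weight matrix, two floating-point operations per multiply-add, and no accounting for activation or KV Cache traffic.

```python
def flops_per_weight_byte(batch_tokens, weight_bits):
    """Arithmetic work done per byte of weights read from memory.

    Assumes a dense d x d weight matrix applied to `batch_tokens` input
    vectors. The dimension d cancels out of the ratio, so it is omitted.
    """
    flops_per_weight = 2 * batch_tokens  # one multiply and one add per token
    bytes_per_weight = weight_bits / 8
    return flops_per_weight / bytes_per_weight


# Single user, token-by-token decoding: one big matrix times one small vector.
single_user_16bit = flops_per_weight_byte(batch_tokens=1, weight_bits=16)
single_user_4bit = flops_per_weight_byte(batch_tokens=1, weight_bits=4)

# Many users or a long prefill: two large matrices multiplied together.
batched_16bit = flops_per_weight_byte(batch_tokens=256, weight_bits=16)
```

With one token in flight, the hardware does roughly one operation per byte of 16-bit weights, so moving weights dominates and compressing them alone pays off. With 256 tokens in flight the same weights are reused 256 times, the arithmetic itself becomes the cost, and that is the regime in which compressing activations as well as weights matters.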

Nir Shavit: You’ve been working on quantization, getting it down to even one bit, right? Is the end of quantization coming soon and we’ll need to find something else to work on?

Dan Alistarh: I can give you a couple of guesses. One direction is people running powerful models locally. There are other shortcuts for this, for instance, just training a model that’s small to begin with via a process usually called distillation. That’s a very reasonable approach. There certainly is a current frontier to efficiency: you can look at the most efficient format that’s currently supported across, let’s say, NVIDIA and AMD GPUs, which is 4-bit weights and activations. It’s still an open question of how we can use this format efficiently for inference end to end. This is probably the biggest problem we’re tackling these days.

Nir Shavit: So four bits is the current frontier, but do you see us going down to two bits?

Dan Alistarh: Even if you could compress both weights and activations to two bits, it wouldn’t help that much, because there’s no hardware support for two bits or lower. There’s just no way of leveraging that for computation. For moving data, we proposed some work, and there’s other work out of Cornell that gets fairly accurate 2-bit representations. The problem is the kernels you have to write can be extremely complex. Coming up with a scheme that’s efficiently decodable, simple for people to work with, and portable at 2-bit would be an interesting problem. But that’s somewhat niche. If you talk to developers, people really want larger batch efficiency: they want both weights and activations to be in the most efficient format possible.

I think sparsity may make a comeback at least in the medium term. We have data that is 32-bit, so you can express a lot of values. Then we go to 4-bit, which has 16 values you can represent. Then imagine you go to 2-bit—that’s literally four values, out of which one is useless because it’s not symmetrical. You can also do ternary—minus one, zero, and one—but once you get to a ternary format, a lot of your data is going to be zero anyway. My point is that, at a certain level of compression, sparsity has to appear. Once you compress low enough, even to 2-bit, zero is going to be an important part of your representation, so you might as well leverage the fact that multiplication with zero can be completely skipped.
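
The counting argument can be checked in a few lines of Python. This sketch assumes two's-complement signed integers, which is one reading of the "not symmetrical" remark, and it uses a fixed threshold to ternarize a toy weight vector. The helper names and the data are hypothetical.

```python
def signed_levels(bits):
    """All values of a two's-complement signed integer of the given width."""
    half = 2 ** (bits - 1)
    return list(range(-half, half))


def ternarize(weights, threshold):
    """Map each weight to -1, 0, or +1; small magnitudes become 0."""
    return [0 if abs(w) < threshold else (1 if w > 0 else -1) for w in weights]


def sparse_dot(ternary_weights, activations):
    """Dot product that skips zero weights and needs no multiplications."""
    total = 0.0
    skipped = 0
    for t, a in zip(ternary_weights, activations):
        if t == 0:
            skipped += 1  # multiplication by zero is skipped entirely
            continue
        total += a if t == 1 else -a
    return total, skipped


levels_4bit = signed_levels(4)  # 16 values, from -8 through 7
levels_2bit = signed_levels(2)  # 4 values; -2 has no positive counterpart

weights = [0.9, -0.1, 0.05, -0.8, 0.2, 0.0, 0.6, -0.3]
tern = ternarize(weights, threshold=0.5)
result, skipped = sparse_dot(tern, [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
```

In this toy case five of the eight ternary weights are zero, so more than half of the work disappears before any arithmetic happens.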

The other thing is, if I give you the weights for Kimi K2 (a massive, trillion-parameter open model), even if you were to compress it to one bit in an accurate way, without any sparsity, even if you could get the model from 1 trillion parameters to the equivalent of 1 trillion over 32 parameters, that’s still a large model that wouldn’t run on your laptop. And rumors are Claude and GPT5 and so on are significantly larger than 1 trillion. So you have to come up with a representation below this 1-bit-per-parameter threshold, because that’s what you need to get to a workable state.
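
The arithmetic behind this point is short enough to write out. The sketch below counts storage for the weights alone, using decimal gigabytes, and takes "1 trillion" as exactly 10^12 parameters. Both choices are assumptions of the example.

```python
def footprint_gb(num_params, bits_per_param):
    """Storage for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


ONE_TRILLION = 10 ** 12

# Weight storage at 32-bit, 16-bit, 4-bit, and 1-bit precision.
sizes = {bits: footprint_gb(ONE_TRILLION, bits) for bits in (32, 16, 4, 1)}
```

Even at one bit per parameter, a trillion-parameter model needs about 125 GB for its weights, before any activations or KV Cache, which is why a representation below one bit per parameter is the target.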

Nir Shavit: How sparse can we make LLMs?

Dan Alistarh: One data point: with my former student Elias Frantar (now at OpenAI), we developed a special dictionary representation which, instead of compressing single elements and mapping them to zero or one, we’re compressing blocks. You compress blocks of, say, 64 elements at a time, and you see the individual code words tend to start repeating: some mapped elements from this dictionary are much more frequent than others. You can use standard Huffman-type theory to find the representation complexity of this compressed space. We showed that for trillion-scale transformers you can get to about 0.8 bits per parameter.
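
To see how block-level repetition can push the cost below one bit per parameter, consider the following Python sketch. It is not the dictionary scheme described above. It only measures the empirical entropy of fixed-size blocks, which is the average code length an ideal Huffman-type coder would approach, and it ignores the cost of storing the dictionary. The block size of 4 and the toy weights are assumptions chosen to keep the example readable.

```python
import math
from collections import Counter


def bits_per_parameter(weights, block_size):
    """Empirical entropy of fixed-size blocks, divided by the block size."""
    blocks = [
        tuple(weights[i:i + block_size])
        for i in range(0, len(weights) - block_size + 1, block_size)
    ]
    counts = Counter(blocks)
    total = len(blocks)
    # Shannon entropy in bits per block, over the observed codewords.
    entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())
    return entropy / block_size


# Toy ternary weights in which one all-zero block appears twice.
block_a = [0, 0, 0, 0]
block_b = [1, 0, -1, 0]
block_c = [0, 1, 0, 0]
weights = block_a + block_b + block_a + block_c

cost = bits_per_parameter(weights, block_size=4)
naive_ternary_cost = math.log2(3)  # coding each element independently
```

Here the block code costs 0.375 bits per parameter, compared with about 1.58 bits for coding each ternary value on its own. The gain comes entirely from some codewords being more frequent than others.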

Nir Shavit: And when you say 0.8 bits, that’s not taking into account that you might be way overparameterized in the model itself.

Dan Alistarh: In some ways it is, because if you look at your matrix values and they keep repeating, information theory tells you you should be able to compress these matrices to a high extent, and these matrices do have high redundancy.

Nir Shavit: I’m suggesting that perhaps in feature space, if I actually could look at what’s there, I would find it’s much sparser than what I’m doing in the weights themselves.

Dan Alistarh: Yes, I completely agree. That’s probably where the next breakthrough in compression is going to come from: understanding the complexity of the feature space and how to decompose that.

Nir Shavit: It’s kind of scary that we need to go a thousand times or more, but we don’t seem to have the tools to go there. Yet between your ears is a device with a quadrillion parameters. So the question is, what do we do?

Dan Alistarh: We’re still starting to understand the right tools or metrics for understanding what these matrices are doing. The current state-of-the-art methods essentially cut the model into layerwise pieces, then they think about it as a dynamical system or impulse-response type of scenario. You want to say, for this matrix that gets input of this kind and gives output of this kind, what’s the tiniest matrix I can create that has the same input-output response?
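
One common way to write this layerwise question down is as a reconstruction problem. The notation here is introduced for illustration and does not come from the interview: $W$ is the original weight matrix of one layer, $X$ is a matrix of sample inputs to that layer, and $\mathcal{C}$ is the set of matrices expressible in the compressed format (for example, 4-bit or sparse).

```latex
\widehat{W} \;=\; \operatorname*{arg\,min}_{W' \in \mathcal{C}} \;
  \bigl\lVert W X - W' X \bigr\rVert_F^2
```

The compressed matrix $\widehat{W}$ is the one whose outputs on the sample inputs stay closest to the original layer's outputs, which is the "same input-output response" criterion in symbols.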

That’s how our GPTQ algorithm works, and what a lot of the people in this field are looking at. What you’re suggesting, trying to see things end to end, is more clever but much harder. It isn’t that we lack the framework to do it, it’s that the models got a thousand times larger overnight and all of this machinery has completely collapsed.

Nir Shavit: You don’t have the compute to do the compression.

Dan Alistarh: That’s certainly one reason. We have tried it: over a holiday break we took off half of our cluster and tested out some complex Fisher Information methods just to see what would happen. Unfortunately, these methods do not work out of the box for massive transformers.

Nir Shavit: If I had to ask you, right now, to throw out a number on how much you can actually compress the fully connected layers of a transformer, what would your guess be? The weight compression, that is.

Dan Alistarh: Ternary is certainly doable, and ternary to binary may be possible.

Nir Shavit: And in terms of sparsity, what percentage of the weights would you have to keep?

Dan Alistarh: You’re asking me very hard questions, as always. I think you have been asking me variants of this question for the past five years continuously. But my guess is 90% of weights are probably useless.

Nir Shavit: So that’s a 10x speed up potentially. That’s not too bad. And when we’re done with all this, what do we do then?

Dan Alistarh: First, there are still interesting breakthroughs to be had in compression. Whether that’s going to bring in massive gains for the average person, I don’t know. But I’m interested in training directly in these formats: sparsifying and quantizing while training the model. One of our problems is we get these artifacts and then we need to compress them. But on the training side, could you do better if you plugged into the training and could you obtain better models? My guess is yes. I’ve been working on this for the past year and there’s definitely interesting stuff to be found.

Nir Shavit: What do you think about compressing activations, basically not computing on things that are zeros? For example, our models use complex activation functions like GELU (Gaussian Error Linear Unit) or SiLU (Sigmoid Linear Unit), but could we use a simpler function like ReLU (Rectified Linear Unit) for greater activation sparsity?

Dan Alistarh: One thing that is definitely doable is computing with low-precision weights and activations. The training processes we have right now are fairly robust for this. With respect to sparsity, this is an idea that’s been coming back roughly every two years. Certainly the successful models we’re seeing are more towards weight and activation quantization types of approaches.

Nir Shavit: So there’s all this stuff in the datacenters. What do you think is going to happen on our laptops or in robots?

Dan Alistarh: That’s why I’m talking about trying to train extremely capable small models. This is an area that needs more work, and there’s a lot of low-hanging fruit. But this is also an area where I don’t think we fully understand what’s happening. There’s a gap between what we see being trained in the open and what’s trained by the big labs. Getting a highly capable local model that can run a bunch of stuff locally—tasks running directly on your local data that you may not want to share—seems like a direction worth exploring.

Having models that can at least dispatch or route certain decisions or tasks between local and cloud is also very interesting, and Red Hat is involved in projects on this as well. The idea is to have a hybrid setup where privacy-critical tasks are solved locally, maybe with a slight decrease in performance and accuracy. If you want higher-level reasoning or whatever the cloud has to offer, you can route requests to the cloud.

LLMs and the future of programming

Nir Shavit: Let’s go in a different direction. Your lab is developing all these high-performance kernels for quantization and sparsity and so on, and at the same time the whole programming world is moving to use LLMs to do programming. Do you believe LLMs will be able to write code and invent algorithms?

Dan Alistarh: It’s a good question, but I’m probably not the best person to give you a solid answer.

Nir Shavit: I think you are the person.

Dan Alistarh: I’m not claiming to give definitive answers here. Thankfully, through my lab and/or Red Hat, I have access to pretty much all the cutting edge tools and models, even though some of them are ridiculously expensive, and I play with them regularly. I think GPU programmers are going to be fine for at least a little while longer. More generally, I think performance programmers are going to be fine, because there is this beauty—which you understand very well, Nir—in building a really nice kernel. There’s some poetry to it, and machines are not able to do this properly yet.

Nir Shavit: What do you think we as people bring into this that the machine can’t replicate?

Dan Alistarh: First, there’s intuition. If you work with students, you see that towards their fourth or fifth year of PhD they get an intuition for things. This system-level intuition is hard to get from a machine. As impressive as some of these tools are, I haven’t seen that kind of thing.

Nir Shavit: Are you saying it’s harder than the hard math problems machines are solving right now? You described this beautifully, saying there’s some poetry to it, so okay, what is it exactly that’s going to be hard for LLMs?

Dan Alistarh: Right now, LLMs are very good at exploring the immediate frontier of knowledge, but I haven’t seen them go very deep—it’s more breadth than depth. They are definitely very useful, even for research use, but I’ve never seen them come up with something completely surprising or something my students wouldn’t be able to work out given sufficient thinking time.

Nir Shavit: You do see people like your students inventing surprising things?

Dan Alistarh: One would hope so! It does happen, usually at the point where they’re ready to graduate and one of the big frontier labs wants to hire them. I’m feeding the machine in some sense.

Nir Shavit: Final question: how do you see the role of academia relative to all this? You mention feeding great people to industry. What else?

Dan Alistarh: One thing I find particularly exciting about being in academia is you can take big risks: sometimes you fail, and it’s fine. Having the freedom to explore in depth, as opposed to just breadth, is a big deal.

Nir Shavit: That’s a problem with industry—you can’t do depth?

Dan Alistarh: To some extent: the very top researchers do depth and do it very well. It’s just that it’s closed, so you can’t see it anymore. And I imagine there must be quite a bit of pressure coming in from the business side. I like the position I’m in specifically because I can push results towards industrial or practical use. When I need to talk to people and ask how we can get something into vLLM, for instance, or how we can get it into LLM Compressor, I have excellent people one Slack message or Zoom call away.

At the same time, I don’t have the pressure of a GPT release next week. It’s an ideal scenario, and I’ve been able to produce useful things often enough that I don’t feel too guilty about enjoying it. It’s been a very fruitful collaboration. Getting gentle guidance from industry while still having enough freedom to try out more crazy stuff works really well, for my lab and for academia.

Nir Shavit: Thank you, it’s been a pleasure to talk to you.
