Advanced proactive caching for heterogeneous storage systems

This project aims to improve the performance of distributed storage systems, such as Ceph and NooBaa, by developing novel caching frameworks that (1) take request heterogeneity into account, and (2) make proactive caching decisions (a.k.a. speculative prefetching).

Caching is one of the best-known and most effective optimization techniques, ubiquitous both within single hosts and in large distributed systems. However, although a plethora of advanced and powerful caching algorithms has been developed and studied, the common approach in industry still relies on relatively basic and generic policies such as LRU (Least Recently Used). These policies have two main drawbacks. First, they are non-adaptive: they cannot alter their behavior according to the properties of the underlying workload, so a target application cannot benefit from a caching policy optimized for its usage patterns. Second, currently available cache policies are reactive rather than proactive: they cannot leverage system-specific and workload-specific patterns to make caching decisions (insert and drop) in advance, for example by prefetching certain items before they are accessed.

We expect our results to improve performance in a differentiated manner, depending on workload characteristics such as the user making the request, the query complexity, the datastore(s) involved in fulfilling the query, and the network capacity. We further intend to exploit recurring access patterns to speculatively prefetch items into the cache.
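To make the contrast between reactive and proactive caching concrete, the following is a minimal Python sketch and not the project's actual design. It compares a plain LRU cache with a hypothetical variant that remembers which key most often followed each key and prefetches that successor. The class names, the first-order successor predictor, and the toy trace are all assumptions chosen for illustration.

```python
from collections import Counter, OrderedDict, defaultdict


class LRUCache:
    """Plain reactive LRU: an item enters the cache only after a miss."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = OrderedDict()  # ordered from least to most recently used
        self.hits = 0
        self.misses = 0

    def _insert(self, key):
        self.items[key] = True
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used

    def access(self, key):
        if key in self.items:
            self.hits += 1
            self.items.move_to_end(key)
        else:
            self.misses += 1
            self._insert(key)


class PrefetchingLRUCache(LRUCache):
    """LRU plus a hypothetical first-order successor predictor (assumption)."""

    def __init__(self, capacity):
        super().__init__(capacity)
        self.successors = defaultdict(Counter)  # key -> counts of next keys
        self.previous = None

    def access(self, key):
        super().access(key)
        # Learn the observed transition previous -> key.
        if self.previous is not None:
            self.successors[self.previous][key] += 1
        self.previous = key
        # Speculatively load the most frequent successor of `key`, if known.
        if self.successors[key]:
            predicted, _ = self.successors[key].most_common(1)[0]
            if predicted not in self.items:
                self._insert(predicted)  # counted as neither hit nor miss


def run(cache, trace):
    """Replay a trace of keys and return (hits, misses)."""
    for key in trace:
        cache.access(key)
    return cache.hits, cache.misses


# Toy workload: a cyclic scan over 4 keys, with room for only 2 in the cache.
trace = ["a", "b", "c", "d"] * 5
plain_result = run(LRUCache(2), trace)
prefetch_result = run(PrefetchingLRUCache(2), trace)
```

On this toy trace the plain LRU cache misses on all 20 accesses, because each key is evicted before it recurs. The prefetching variant misses only during the first pass plus one more access (5 misses, 15 hits), because from then on the learned pattern brings each key in just before it is requested. A real system would also need to account for the cost of wrong predictions, which this sketch ignores.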

The main contributions of this project will be (i) algorithmic: we will study novel designs for caching in storage systems that incorporate heterogeneity and proactivity; and (ii) system-level: we intend to upstream our proposed solutions to the open-source community, most notably within the Ceph architecture, e.g., by extending the NooBaa framework. We expect our work to directly benefit storage systems that use our extended framework. Furthermore, we expect our solutions to be applicable to other systems that use distributed storage, and potentially to more general caching-based environments.

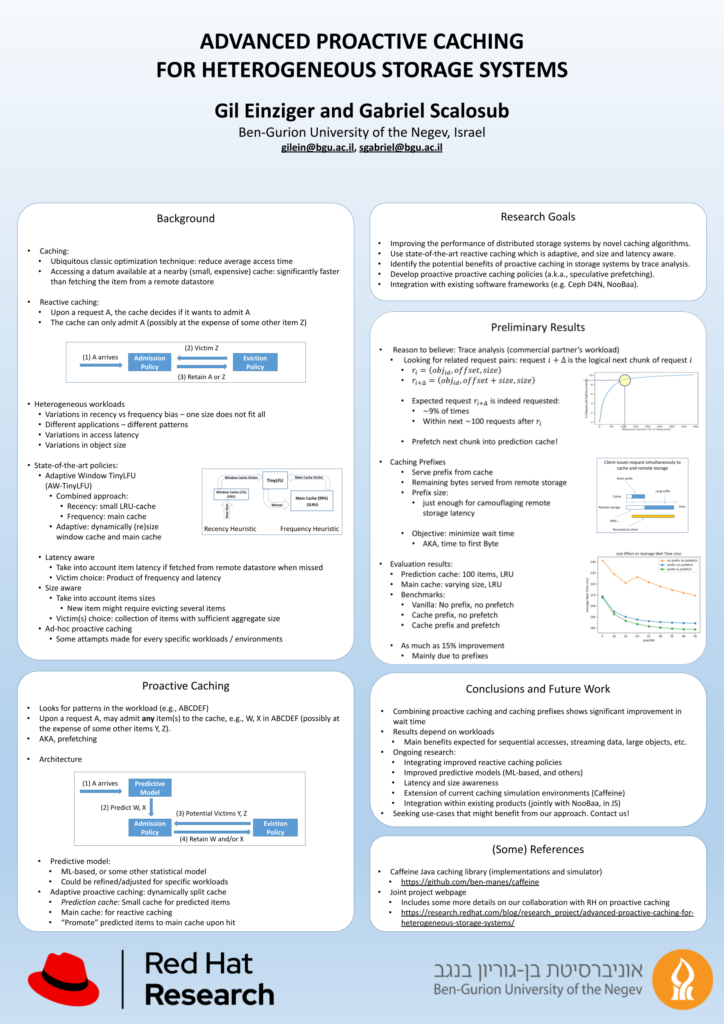

Project Poster
