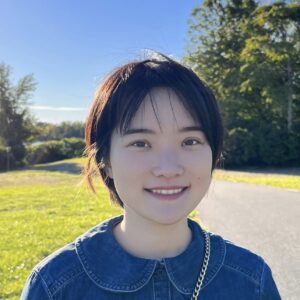

When the research interests that eventually coalesced into Red Hat Research first started, open hardware innovation was not a central feature on our roadmap. We asked Distinguished Engineer Ulrich Drepper and Senior Data Scientist (FPGAs) Ahmed Sanaullah to explain how and why that changed. Uli leads Red Hat’s research and future vision on artificial intelligence, machine learning, and hardware innovation, and he first wrote about hardware for RHRQ in “Hardware is back” (May 2020). Ahmed’s current focus is building open source tooling for FPGAs. He received his PhD from Boston University in 2019, and his dissertation—referenced below—won the Outstanding Computer Engineering Dissertation Award. He’s written about FPGAs for RHRQ in “Roll your own processor: building an open source toolchain for an FPGA” (Nov 2019) and “RISC-V for FPGAs: benefits and opportunities” (May 2022).

FPGAs and open hardware

When Red Hat Research first started working with Boston University, we were not planning on doing the kind of hardware work we are doing now. Our interests were more focused on what we could do in the realm of container deployment and acceleration. Ahmed proposed his research to Red Hat back in October 2016, and we were trying to use hardware based on FPGAs (field-programmable gate arrays) to do computation on the networking path for cloud nodes. By building support that would allow cloud tenants and providers to develop and deploy FPGA workloads, we were aiming to enable a number of projects, such as network packet processing, metering and telemetry, middleware offloads, and even high-performance computing (HPC) application acceleration such as molecular dynamics and machine learning.

Open source through the research lens

As RHRQ starts its fifth year, it’s impossible to resist the temptation to look back at all we’ve done so far. The result is this collection of perspectives. Together they paint an inspiring picture of the innovative work that can be accomplished when engineering know-how and bold research questions come together in open source environments.

- Focus on clouds and research IT, Heidi Picher Dempsey and Gagan Kumar

- Focus on testing and operations, Daniel Bristot de Oliveira and Bandan Das

- Focus on security, privacy, and cryptography, Lily Sturmann

- Focus on AI and machine learning, Sanjay Arora and Marek Grác

- Focus on education, Sarah Coghlan and Matej Hrušovský

Our use of FPGAs for this was motivated by the additional flexibility, performance, and power/performance ratio FPGAs could give us. ASICs (application-specific integrated circuits) freeze you into a specific set of features that improves performance and efficiency at the cost of flexibility and upfront cost, while deploying such workloads on CPUs can result in greater flexibility at the cost of reduced efficiency. Moreover, running these workloads on a CPU also reduces resources that can be leased out to tenants. FPGAs, by contrast, basically give you CPU-like flexibility and ASIC-like performance by allowing you to reconfigure the hardware. This results in a persistent state of innovation where you can modify your workloads and consistently update things, all while reducing power requirements and freeing up other datacenter resources.

The problem with FPGAs, specifically, is that the tooling today depends on proprietary software that is also probably 30 years behind the rest of the software world. There was simply never the competition needed to drive improvement in how these tools could be used. Having these tools as part of a larger infrastructure—one where you can press a button and it generates everything in a way that a software developer can understand—was simply not possible. That’s why we started to look into the idea of building out systems and specifying applications or supporting application deployment on custom hardware in a way that is useful, not a one-shot design that only you can use and nobody else can benefit from. We founded this effort on Ahmed’s thesis work, which explored building infrastructure around an FPGA to support deploying applications in a standard way. Done properly, this infrastructure will permit the FPGA to be a kind of offload engine in a way that is transparent to the host. All these ideas taken together were the genesis of our hardware research.

To actually achieve this, we had to start from the bottom. There were many open source projects we could piggyback on for some of this work, but we also had to build up the blocks that interface with the system’s users and with the host system. The host system needs to communicate with the FPGA, for instance through PCIe (Peripheral Component Interconnect Express), USB, or Wi-Fi, and we are building all these blocks out. Our intention was always that the deployment and application development could be done by someone not steeped in the world of hardware. We are building the tooling to transform inputs from regular software developers, in an easily understandable way, into the appropriate configuration files for all the involved tools without losing any of the efficiencies the low-level tools grant us.

Where we, as Red Hat Research, can have the most impact is in the area of edge devices. I mean real edge devices—not edge compute at the cell tower, but something that is living next to your machine control system or doing some monitoring at an entry system. These systems are going to be doing more of the work they are supposed to do locally, in a more intelligent way, but in a resource-constrained way. For instance, say you have a door-entry system that takes a photo, sends it back to a server, does image analysis there, and then sends a signal back. What if, instead, we can deploy the models on an FPGA, which is in the door-entry system itself, and because we are programming it in a highly efficient way, it can make the decisions locally?

This type of system is going to be extremely important, but we can’t possibly do it in the current ecosystem of proprietary clouds. Companies like Amazon, Google, and Microsoft provide similar functionality, but then you are exclusively tied into their services. We have to provide an ecosystem outside that.

Driven by openness

What we’re doing is not so much providing a solution to something as really working on infrastructure and tools the way they should have been designed from day one. The need for an open ecosystem apart from the walled gardens of the proprietary cloud vendors compels us to take a new bottom-up approach toward a completely changeable platform. Once we accomplish that, we’ll have a world where more innovation is possible, and devices are working better with less centralization, so every single device out there doesn’t have to phone back to a set of datacenters to make a decision.

Every single day there are more devices out there to do different things. For example, in a monitoring system, you’re always adding things, like a new sensor. When you want to do something different, you might have to design a new system. But with an FPGA, you have the flexibility to keep the same system and just connect a couple of wires differently on the board, connect the different sensor, and reprogram. You can make it work, and do it with a lot less energy consumption than even a microcontroller can offer.

It has also helped that over the last five years it’s become evident to more people that FPGAs are not merely implementation and testing devices for a later ASIC design. Now they are recognized as deployment targets for applications in their own right. That was really a prerequisite for this work: people had to realize that FPGAs are something the general public should be able to use by themselves. This shift started when the first toolchains for programming FPGAs became fully open source, which happened only within the last ten years.

Moving toward mass adoption

Looking ahead, we want to advance the state of specialized hardware, such as FPGAs, in a manner that maximizes its practical value to the community. This will require research toward reducing hardware development overheads to match those of software development, identifying and providing mechanisms for software stacks to leverage specialized hardware, and exploring novel use cases and hardware deployment configurations. To enable and accelerate this research, we are driving an on-premises co-design research lab called CoDes. This lab co-locates hardware, software, researchers, and engineers in a unique collaborative space focused on supporting a completely configured, drivable deployment of arbitrary applications on devices that encompass FPGAs but can also have microcontrollers, sensors, and so on. In the longer term, we are also working on problems that CPU technologies and even GPU technologies are not well suited for.

We are also working with BU on various techniques around confidential computing. Using some of the abilities of FPGAs, for instance direct communication between several of them, you can implement multiparty computation (MPC) that runs much faster than it would on traditional hardware, without the interference of an operating system. If you imagine two applications that implement tasks like MPC and have high communication needs, you can cut out much of the latency if each application talks through a wire directly to its neighbor. We’ve already demonstrated this, even on the software side, where we have cut out a normal communications line. Similarly, in the Unikernel Linux work we can already achieve dramatic speed-ups. With FPGAs, we expect to achieve even better results. Our hope is that the tools we are building can become widely available and achieve mass adoption.
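
For readers unfamiliar with MPC, the following minimal Python sketch illustrates why such workloads have high communication needs. It uses additive secret sharing, a standard textbook building block, to compute a sum without any party revealing its input. It is not the implementation used in the work with BU; the function names `share` and `secure_sum` and the choice of modulus are assumptions made purely for illustration, and the parties are simulated inside one process rather than connected by real links.

```python
import secrets

# Public modulus for the arithmetic; an arbitrary choice for this sketch.
MODULUS = 2**61 - 1


def share(value, n_parties):
    """Split value into n_parties random shares that sum to value mod MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % MODULUS
    return shares + [last]


def secure_sum(private_inputs):
    """Simulate parties jointly computing the sum of their private inputs."""
    n = len(private_inputs)
    # sent[i][j] is the share party i sends to party j.
    # Even this tiny protocol exchanges n * n messages.
    sent = [share(x, n) for x in private_inputs]
    # Party j adds up the shares it received and publishes only that partial sum.
    partials = [sum(sent[i][j] for i in range(n)) % MODULUS for j in range(n)]
    # Combining the published partial sums yields the total and nothing else.
    return sum(partials) % MODULUS


if __name__ == "__main__":
    print(secure_sum([12, 30, 7]))
```

Every party sends a message to every other party before any result appears, so the latency of each link directly limits how fast the computation can proceed. That is the cost that direct FPGA-to-FPGA communication aims to reduce.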