Boston University

Boston University is our flagship partner and the center of two major Red Hat initiatives: the Red Hat Collaboratory at Boston University and the Mass Open Cloud. Both initiatives are dedicated to the idea that marrying research to the open source development methodology, through a partnership between scientists and open source developers, is a uniquely fast and fruitful way of putting great ideas into practice. Boston University is also the center of our Boston intern program, which generates more new Red Hatters with each passing year.

News

BU-Red Hat vulnerability research recognized at IEEE hardware hacking competition

"Attacking cloud systems using passed-through PCIe devices," a project funded through the 2025 Red Hat Collaboratory Research Incubation Award, earned second place at the inaugural hardware hacking competition at the IEEE International Symposium on Hardware Oriented...

Red Hat welcomes BU prof Orran Krieger to lead AI platform initiative

Red Hat Research has periodically been fortunate to have faculty from Boston-area universities spend their sabbaticals working with our team. We’re happy to announce that in June 2024 we took this relationship to the next level by welcoming Boston University professor...

Machine learning enhanced high-level synthesis research wins best paper at Quality Electronic Design conference

“AutoAnnotate: Reinforcement-learning-based code annotation for high-level synthesis,” a paper resulting from a project at the Red Hat Collaboratory at Boston University, was selected as one of four Best Papers at the 25th International Symposium on Quality Electronic...

How research is driving AI at the edge, smart cities, and RISC-V at the 2024 Red Hat Summit

Planning to attend the 2024 Red Hat Summit, May 6-9, in Denver, CO? Topics related to Red Hat Research, the MOC Alliance, New England Research Cloud, and the Red Hat Collaboratory at Boston University will be featured in several presentations on popular subjects like...

Red Hat Research partner MOC Alliance announces 2024 workshop program including focus on AI and the AI Alliance

Updated on February 20, 2024. This article was originally posted January 20, 2024. The MOC Alliance annual workshop will be held February 28-29, 2024 at the George Sherman Union, 774 Commonwealth Ave., Boston, with featured topics including the newly launched AI...

Research in AI, hybrid cloud, edge, and data privacy wins Red Hat Collaboratory awards

The Red Hat Collaboratory at Boston University has announced the recipients of its 2024 Research Incubation Awards. Twelve new and renewed research projects received nearly $2 million in funding to support research in topics ranging from reconfigurable hardware to...

AI Alliance launches to advance open, safe, responsible AI

Red Hat Research is delighted by the potential for new opportunities suggested by the launch of the AI Alliance, which brings leading organizations across industry, academia, research, and government together to foster an open community. Through its partnership with...

AI product strategies and research topics highlighted at Red Hat Colloquium

AI technology is developing so quickly that by the time an enterprise implements a solution, it can easily be out of date. How do you know whether you’re pursuing a sound long-term strategy or just chasing the next shiny thing? In addition, the massive scale of AI...

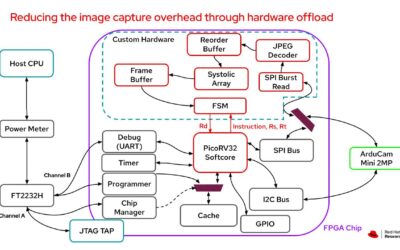

Dynamic Infrastructure Services Layer: Putting the benefits of programmable hardware within reach

The pressure on developers to optimize applications for speed, performance, and efficiency keeps growing. Imagine if, instead of just modifying source code or linking in more efficient libraries, you could also customize your hardware stack to meet your specific...

Research in AI for automotive and distributed systems wins recognition

Papers from two projects based at the Red Hat Collaboratory at Boston University have been recognized for excellence at preeminent research conferences. Both projects were recipients of the Red Hat Collaboratory 2022 and 2023 Research Incubation Awards. Details about...

Related Projects

| Title | Summary | Research Area |
| --- | --- | --- |
| Mass Open Cloud (MOC): An open, distributed platform enabling AI/ML workloads | Red Hat has for many years participated in and supported the Mass Open Cloud Alliance (MOC-A). With the rising importance … | AI-ML, Cloud-DS, Hardware and the OS, Security, Privacy, Cryptography |
| ChRIS Research Integration Service | ChRIS (ChRIS Research Integration Service) is an infrastructure that initially started as an open source research project at the Boston … | |
| Optimizing Kernel Paths for Performance and Energy | The growing size of modern OSes such as Linux is well documented and likely exacerbated as more features are packed … | |
| Discovering Opportunities for Optimizing OpenShift Energy Consumption | Abstract: Drawing from our collective experience, we believe a wide array of opportunities for implementing energy optimization exist within OpenShift. However, … | AI-ML, Testing and Ops |
| Lock ’n Load: Deadlock Detection in Binary-only Kernel Modules | Two shortcomings in the Linux kernel/module security analysis landscape motivate this research. First, existing security analyses focus mainly on detecting … | |
| HySe – Hypervisor Security through Component-Wise Fuzzing | The security of the entire cloud ecosystem crucially depends on the isolation guarantees that hypervisors provide between guest VMs and … | Security, Privacy, Cryptography |
| CoFHE: Compiler for Fully Homomorphic Encryption | In today’s data-driven world, our personal data is frequently shared with enterprises and cloud service providers. Unfortunately, data processing in … | Cloud-DS, Hardware and the OS, Security, Privacy, Cryptography |
| Curator Operator | Curator is an air-gapped infrastructure consumption analysis tool for the Red Hat OpenShift Container Platform. The curator retrieves infrastructure … | |
| Improving Cyber Security Operations using Knowledge Graphs | Abstract: The objective of this project is to improve the workflow and performance of security operation centers, including automating several of … | AI-ML, Cloud-DS, Testing and Ops |
| Minimal Mobile Systems via Cloud-based Adaptive Task Processing | The high cost of robots today has hindered their widespread use. Specifically, a limiting factor involves extensive hardware and software … | AI-ML, Cloud-DS |
| Co-Ops: Collaborative Open Source and Privacy-Preserving Training for Learning to Drive | Note: This project is a continuation of OSMOSIS: Open-Source Multi-Organizational Collaborative Training for Societal-Scale AI Systems. Abstract: Current development of autonomous … | AI-ML, Cloud-DS |
| CoDes: A co-design research lab to advance specialized hardware projects | CoDes research lab provides the infrastructure and engineering foundation needed to support co-design based specialized hardware research. The lab is currently located at Boston University, as part of the Red Hat – Boston University collaboratory. | AI-ML, Cloud-DS, Hardware and the OS |
| Prototyping a Distributed, Asynchronous Workflow for Iterative Near-Term Ecological Forecasting | Abstract: The ongoing data revolution has begun to fuel the growth of near-term iterative ecological forecasts: continually-updated predictions about the future … | |
| FHELib: Fully Homomorphic Encryption Hardware Library for Privacy-preserving Computing | Note: Please visit the Privacy-Preserving Cloud Computing using Homomorphic Encryption project page for information on a related project. In today’s … | Cloud-DS, Hardware and the OS, Security, Privacy, Cryptography |
| SECURE-ED: Open-Source Infrastructure for Student Learning Disability Identification and Treatment | The project aims to develop an infrastructure that would enable users to input data about an individual student and receive … | |
| Relational Memory Controller | Note: See the Near-Data Data Transformation project page for information about the work that led to this project. Abstract: Data movement … | |
| Learned Cost-Models for Robust Tuning | Note: Please see the Robust Data Systems Tuning project page for earlier results associated with this research. Abstract: Data systems’ performance is … | |
| Tuning the Linux kernel | The Linux kernel is a complicated piece of software with multiple components interacting with each other in complex ways. The … | AI-ML, Hardware and the OS |
| AI for Cloud Ops | This project aims to address this gap in effective cloud management and operations with a concerted, systematic approach to building and integrating AI-driven software analytics into production systems. We aim to provide a rich selection of heavily-automated “ops” functionality as well as intuitive, easily-accessible analytics to users, developers, and administrators. | AI-ML, Cloud-DS, Hardware and the OS |
| Creating a global open research platform to better understand social sustainability using data from a real-life smart village | A BU team is working with SmartaByar and the Red Hat Social Innovation Program in order to create a global … | AI-ML, Cloud-DS, Security, Privacy, Cryptography |
| DISL: A Dynamic Infrastructure Services Layer for Reconfigurable Hardware | Open programmable hardware offers tremendous opportunities for increased innovation, lower cost, greater flexibility, and customization in systems we can now … | Cloud-DS, Hardware and the OS |
| Practical Programming of FPGAs with Open Source Tools | This project has evolved from the Practical programming of FPGAs in the data center and on the edge project. Please see … | Cloud-DS, Hardware and the OS |
| Near-Data Data Transformation | BU faculty members Manos Athanassoulis and Renato Mancuso will work with Red Hat researchers Uli Drepper and Ahmed Sanaullah to create a hardware-software co-design paradigm for data systems that implements near-memory processing. | Cloud-DS, Hardware and the OS |
| Towards high performance and energy efficiency in open-source stream processing. | BU faculty members Vasiliki Kalavri and Jonathan Appavoo will work with Red Hat researcher Sanjay Arora to create an open-source … | Hardware and the OS |
| OSMOSIS: Open-Source Multi-Organizational Collaborative Training for Societal-Scale AI Systems | The goal of our project is to develop a novel framework and cloud-based implementation for facilitating collaboration among highly heterogeneous research, development, and educational settings. | AI-ML, Cloud-DS |
| Privacy-Preserving Cloud Computing using Homomorphic Encryption | Note: Please visit the FHELib: Fully Homomorphic Encryption Hardware Library for Privacy-preserving Computing project page for information on a related … | Cloud-DS, Hardware and the OS, Security, Privacy, Cryptography |
| Serverless Streaming Graph Analytics | In this project, we will focus on graph streams that can be used to model distributed systems, where workers are represented as nodes connected with edges that denote communication or dependencies. | Cloud-DS, Testing and Ops |
| Enabling Intelligent In-Network Computing for Cloud Systems | With the network infrastructure becoming highly programmable, it is time to rethink the role of networks in the cloud computing … | Cloud-DS, Testing and Ops |
| Linux Computational Caching | In this speculative work we are attempting to explore a biologically motivated conjecture on how memory of past computing can be stored and recalled to automatically improve a system’s behavior. | AI-ML, Cloud-DS, Hardware and the OS |
| The Open Education Project (OPE) | In this project we are developing an exemplar set of materials for an introductory computer systems class that exploits Jupyter, Jupyter Books, OpenShift, and the Mass Open Cloud to develop and deliver a unique educational experience for learning about how computer systems work. | AI-ML, Cloud-DS |
| Symbiotes: A New step in Linux’s Evolution | This work explores how a new kind of software entity, a symbiote, might bridge this gap. By adding the ability for application software to shed the boundary that separates it from the OS kernel, it is free to integrate, modify, and evolve into a hybrid that is both application and OS. | Hardware and the OS, Security, Privacy, Cryptography |
| Intelligent Data Synchronization for Hybrid Clouds | The goal of this project is to design configurable synchronization solutions on a common platform for a wide range of edge computing scenarios relevant to Red Hat. These solutions will be thoroughly validated on a state-of-the-art testbed capable of emulating realistic environments (e.g., smart cities). | AI-ML, Cloud-DS, Testing and Ops |
| Secure cross-site analytics on OpenShift logs | The project aims to explore whether cryptographically secure Multi-Party Computation, or MPC for short, can be used to perform secure cross-site analytics on OpenShift logs with minimum client participation. | Cloud-DS, Security, Privacy, Cryptography, Testing and Ops |
| Robust Data Systems Tuning | Note: Please see the Learned Cost-Models for Robust Tuning project page for research that has grown from this project. See … | AI-ML, Hardware and the OS |
| Robust LSM-Trees Under Workload Uncertainty | We introduce a new robust tuning paradigm to aid in the design of data systems with uncertain assumptions by modeling the behavior of the system and then utilizing these models in conjunction with techniques in robust optimization. Our approach is demonstrated through tuning a popular log-structured merge-tree based storage engine, RocksDB. | Hardware and the OS |
| Does efficient, private, agnostic learning imply efficient, agnostic online learning? | Users of online services today must trust platforms with their personal data. Platforms can choose to enable privacy by default … | |
| Are Adversarial Attacks a Viable Solution to Individual Privacy? | Users of online services today must trust platforms with their personal data. Platforms can choose to enable privacy by default … | Security, Privacy, Cryptography |
| Hybrid Cloud Caching | A fundamental goal of the Hybrid Cloud Cache project is to allow simplified integration into existing data lakes, to enable caching to be transparently introduced into hybrid cloud computation, to support efficient caching of objects widely shared across clusters deployed by different organizations, and to avoid the complexity of managing a separate caching service on top of the data lake. | |
| Volume Storage Over Object Storage | This project creates a hybrid storage system composed of a high-speed local device (e.g., Optane) to store short term data, along with a write-once object store (e.g., Ceph RGW) to store data blocks permanently. | Cloud-DS |
| Elastic Secure Infrastructure | This project encompasses work in several areas to design, build and evaluate secure bare-metal elastic infrastructure for data centers. | Cloud-DS, Security, Privacy, Cryptography, Testing and Ops |
| Open Cloud Testbed | The Open Cloud Testbed project will build and support a testbed for research and experimentation into new cloud platforms – the underlying software which provides cloud services to applications. Testbeds such as OCT are critical for enabling research into new cloud technologies – research that requires experiments which potentially change the operation of the cloud itself. | AI-ML, Cloud-DS, Hardware and the OS, Security, Privacy, Cryptography, Testing and Ops |
| Kernel Techniques to Optimize Memory Bandwidth with Predictable Latency | Recent processors have started introducing the first mechanisms to monitor and control memory bandwidth. Can we use these mechanisms to enable machines to be fully used while ensuring that primary workloads have deterministic performance? This project presents early results from using Intel’s Resource Director Technology and some insight into this new hardware support. The project also examines an algorithm using these tools to provide deterministic performance on different workloads. | Hardware and the OS |
| Unikernel Linux | This project aims to turn the Linux kernel into a unikernel with the following characteristics: 1) is easily compiled for any application, 2) uses battle-tested, production Linux and glibc code, 3) allows the entire upstream Linux developer community to maintain and develop the code, and 4) allows applications normally running on vanilla Linux to benefit from unikernel performance and security advantages. | Hardware and the OS |
| Fuzzing Device Emulation in QEMU | Hypervisors, the software that allows a computer to simulate multiple virtual computers, form the backbone of cloud computing. Because they are both ubiquitous and essential, they are security-critical applications that make attractive targets for potential attackers. | Hardware and the OS, Security, Privacy, Cryptography, Testing and Ops |
| Automatic Configuration of Complex Hardware | In this project, we pursue three goals towards this understanding: 1) identify, via a set of microbenchmarks, application characteristics that will illuminate mappings between hardware register values and their corresponding microbenchmark performance impact, 2) use these mappings to frame NIC configuration as a set of learning problems such that an automated system can recommend hardware settings corresponding to each network application, and 3) introduce either new dynamic or application instrumented policy into the device driver in order to better attune dynamic hardware configuration to application runtime behavior. | Hardware and the OS |
| Quest-V, a Partitioning Hypervisor for Latency-Sensitive Workloads | Quest-V is a separation kernel that partitions services of different criticality levels across separate virtual machines, or sandboxes. Each sandbox encapsulates a subset of machine physical resources that it manages without requiring intervention from a hypervisor. In Quest-V, a hypervisor is only needed to bootstrap the system, recover from certain faults, and establish communication channels between sandboxes. | Hardware and the OS |
| Performance Management for Serverless Computing | Serverless computing provides developers the freedom to build and deploy applications without worrying about infrastructure. Resources (memory, cpu, location) specified … | Cloud-DS |